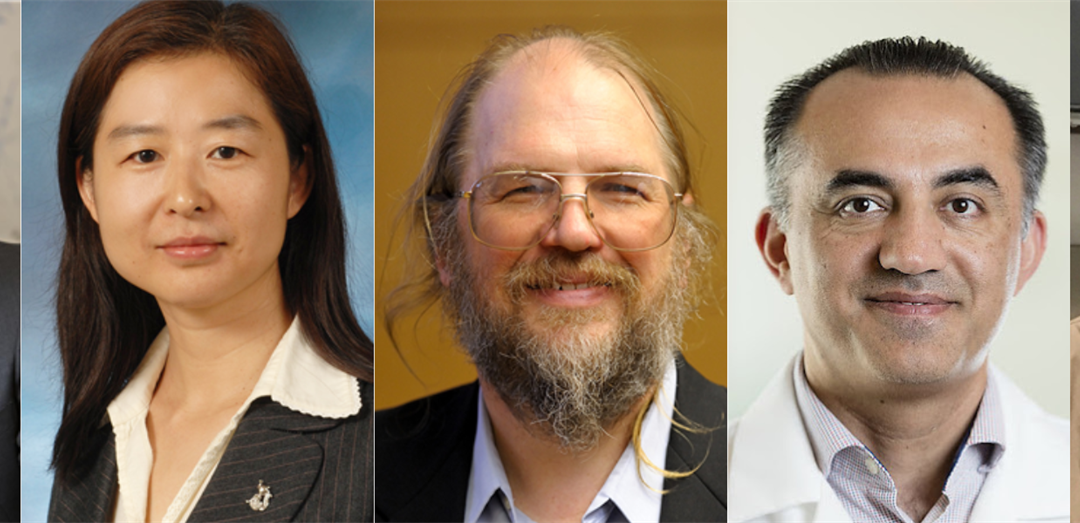

A research team including bioengineering professors Frank Brooks and Hua Li, computer science professor David Forsyth and Bioengineering Department Head Mark Anastasio has been awarded a $1.7 million grant from the National Institutes of Health (NIH) for a project to develop methods for estimating the optimal task performance of medical imaging systems. The team also includes Dr. Mohammad Eghtedari from the University of California San Diego.

Modern medical imaging systems combine complicated hardware with sophisticated computational methods. Because so many system parameters affect image quality, because the objects to be imaged vary so widely, and because of ethical concerns, it is often impractical to assess and refine emerging imaging technologies through clinical trials in their early stages of development. This can slow the development of new technologies. For these reasons, there is great interest in virtual imaging trials, which permit the automated simulation and analysis of clinically relevant imaging systems in silico. However, because medical images are usually acquired for specific purposes, assessing and refining imaging systems requires figures of merit that reflect how useful the images are for specific clinical tasks. Computing such measures presents significant challenges.

The technologies that team leader Anastasio and his fellow researchers plan to develop will address these challenges and will facilitate the design and refinement of better medical imaging systems overall.

“The broad goal of this grant is not to develop a particular imaging technology, but to develop a generalizable, broad set of computational tools that engineers, physicists, and scientists can use to better design their own imaging systems,” said Anastasio. “The key aspect here is that in order to design a better imaging system, you need to define what ‘better’ means. That’s the tricky part, and is where deep learning will play an important role.”

That’s where the NIH project comes in. Anastasio and his team plan to create deep learning-based tools that give imaging technology developers surrogates of image quality measures early in the system design process. Ideally, this will provide more streamlined, usable feedback early in the technology development phase and reduce reliance on human assessors and radiologists once physical prototypes have been constructed.

“Essentially, it will provide a new capability for engineers and physicists to develop more effective imaging technologies,” said Anastasio. “We hope the end result will be the development of a suite of open source, computational tools that will be made widely available to the imaging community, so other developers can use our tools. And then through computational modeling of their systems, they can better refine and advance them towards ultimate application and utility.”

This project will also open doors to future research, particularly where it intersects with the rapidly evolving field of machine learning. Specific deep learning technologies developed in the project will include ambient deep generative models, which learn the distributions of the properties of objects to be imaged from experimental data, and advanced classification methods for establishing upper bounds on the task performance that a given imaging system can achieve.

“There’s always going to be room to further advance the tools that we’re developing,” said Anastasio. “We’re going to be taking state-of-the-art machine learning and deep learning technologies and putting them to use to advance the field of imaging science.”

Story written by Bethan Owen, Marketing and Communications Coordinator in the Department of Bioengineering.